The Latent Value of Dormant Drawing Data

In the warehouses of the AEC (Architecture, Engineering, and Construction) industry lie vast amounts of 2D CAD drawings accumulated over decades. Most of these valuable assets exist as PDFs, making them difficult to integrate into modern digital workflows like Building Information Modeling (BIM).

Until now, digitizing these drawings has meant manual labor: a person looks at a drawing and recreates it as a 3D model. This process is notoriously inefficient, costly, and time-consuming. But what if AI could automatically read, understand, and convert this legacy data into digital assets? A powerful answer to this question comes from researchers at the Technical University of Munich (TUM) with their AI technology VectorGraphNet, described in the paper “Graph Attention Networks for Accurate Segmentation of Complex Technical Drawings” (October 2024).

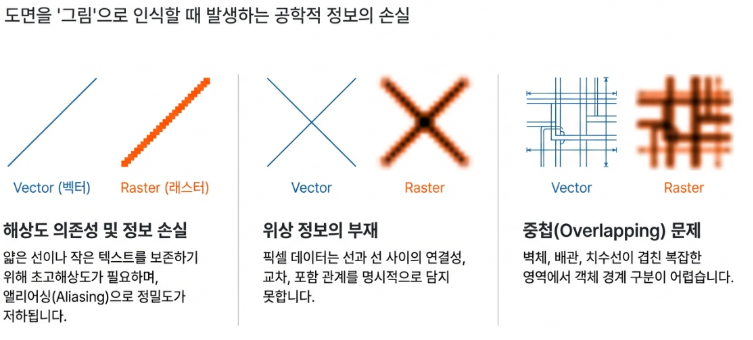

Why Raster is Not the Answer

Traditional CAD recognition has largely relied on “Rasterization”, which is the process of converting vector data into a collection of pixels. However, this approach fails to capture the essence of engineering drawings due to several critical limitations:

- Resolution Dependency & Information Loss: Representing thin lines or tiny symbols in large-scale drawings requires extremely high resolution. This leads to massive memory overhead and “aliasing” (distortion), which compromises the sub-millimeter precision required in engineering.

- Lack of Topological Information: Pixels are just a grid of colored dots. Vital relationships between objects, such as whether two lines “meet” or “intersect,” are not stored anywhere, so the AI is left with the difficult task of inferring these connections solely from pixel patterns.

- Overlapping & Density Issues: In complex drawings where layers of walls, plumbing, electricity, and dimension lines overlap, pixel-based methods struggle to distinguish clear boundaries between objects.
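The resolution cost described in the first point above can be estimated with simple arithmetic. The sketch below assumes an ISO A0 sheet (841 mm × 1189 mm), a pixel size of 0.1 mm, and an uncompressed 3-byte RGB pixel; the pixel size and pixel format are illustrative assumptions, not figures from the VectorGraphNet work.

```python
def raster_cost(width_mm, height_mm, mm_per_pixel, bytes_per_pixel=3):
    """Return (width_px, height_px, total_bytes) for an uncompressed raster."""
    width_px = round(width_mm / mm_per_pixel)
    height_px = round(height_mm / mm_per_pixel)
    return width_px, height_px, width_px * height_px * bytes_per_pixel


# ISO A0 sheet at an assumed 0.1 mm per pixel, 3 bytes per pixel.
print(raster_cost(841, 1189, 0.1))  # (8410, 11890, 299984700)
```

That is roughly 100 million pixels and about 300 MB for a single sheet, and halving the pixel size quadruples the cost.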

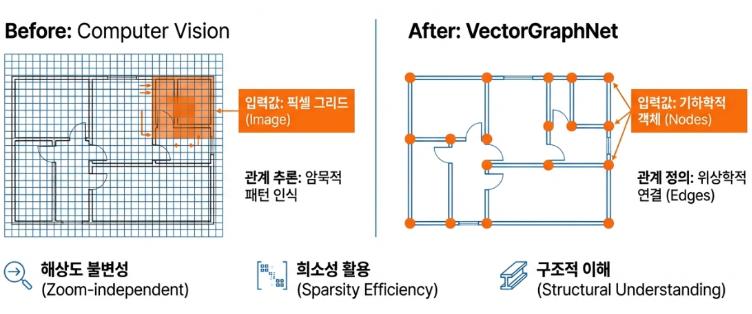

A Paradigm Shift: “CAD Drawings are Inherently Graphs”

VectorGraphNet presents a revolutionary shift: “From Pixels to Graphs.” Its core insight is to treat a CAD drawing not as an image, but as a network of objects and relationships, a Graph.

- Node: Every geometric object (lines, arcs, circles) becomes a ‘point’ or node.

- Edge: The spatial and geometric relationships (e.g., meeting, parallel) become ‘lines’ or edges connecting the nodes.

By bypassing rasterization, this graph-based approach is Resolution-Independent and preserves the structural essence of the drawing, enabling far more efficient and accurate analysis.

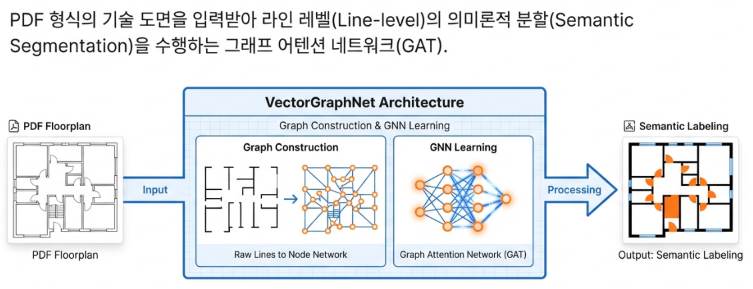

Overview of VectorGraphNet

VectorGraphNet is a deep learning framework that performs semantic segmentation by training directly on the raw vector data of CAD drawings using Graph Neural Networks (GNNs). This allows the system to classify what each object in a drawing represents, such as a wall, door, or window. In essence, it aims to let machines read the semantic meaning of a drawing much as a human reader would.

As a reference, a Graph Neural Network is a type of neural network designed to understand relationships. Rather than simply looking at individual data points, it learns from the connections (nodes and edges) between data to identify underlying patterns. Because of this capability, GNNs are widely used in social network analysis, molecular structure prediction, and recommendation systems.

How VectorGraphNet Works: A 3-Step Process

The overall pipeline of VectorGraphNet consists of three stages: refining the data, defining the relationships, and training the model.

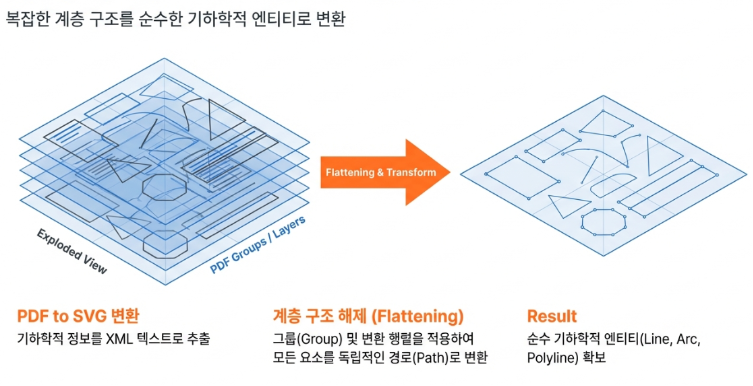

Step 1: Data Preparation (Refining PDFs into SVG)

First, unstructured PDF drawings, which are difficult for machines to process, are converted into SVG (Scalable Vector Graphics) format. Subsequently, any complex groupings or transformation matrices within the SVG are unraveled through a process called “Hierarchy Flattening.” This transforms every geometric object into an independent path on a single coordinate system, ensuring the AI receives only pure and refined geometric data as input.
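The idea behind hierarchy flattening can be sketched in a few lines: walk the SVG tree, multiply each element's transform onto its parent's, and emit every primitive in the root coordinate system. This is a minimal illustration, not the authors' preprocessing code. It assumes the drawing contains only `<line>` elements and only `translate()`, `scale()`, and `matrix()` transforms; the function names are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

SVG_NS = "{http://www.w3.org/2000/svg}"
IDENTITY = (1.0, 0.0, 0.0, 1.0, 0.0, 0.0)  # SVG matrix(a, b, c, d, e, f)


def compose(parent, child):
    """Return parent * child, i.e. apply `child` first, then `parent`."""
    a1, b1, c1, d1, e1, f1 = parent
    a2, b2, c2, d2, e2, f2 = child
    return (a1 * a2 + c1 * b2, b1 * a2 + d1 * b2,
            a1 * c2 + c1 * d2, b1 * c2 + d1 * d2,
            a1 * e2 + c1 * f2 + e1, b1 * e2 + d1 * f2 + f1)


def parse_transform(text):
    """Turn a transform attribute into one affine matrix."""
    matrix = IDENTITY
    for name, args in re.findall(r"(\w+)\s*\(([^)]*)\)", text or ""):
        v = [float(x) for x in re.split(r"[\s,]+", args.strip()) if x]
        if name == "translate":
            step = (1.0, 0.0, 0.0, 1.0, v[0], v[1] if len(v) > 1 else 0.0)
        elif name == "scale":
            step = (v[0], 0.0, 0.0, v[1] if len(v) > 1 else v[0], 0.0, 0.0)
        elif name == "matrix" and len(v) == 6:
            step = tuple(v)
        else:
            continue  # rotate(), skewX(), ... are left out of this sketch
        matrix = compose(matrix, step)
    return matrix


def apply(m, x, y):
    a, b, c, d, e, f = m
    return (a * x + c * y + e, b * x + d * y + f)


def flatten_lines(svg_text):
    """Return every <line> as ((x1, y1), (x2, y2)) in root coordinates."""
    flat = []

    def walk(node, ctm):
        ctm = compose(ctm, parse_transform(node.get("transform")))
        if node.tag == SVG_NS + "line":
            p1 = apply(ctm, float(node.get("x1")), float(node.get("y1")))
            p2 = apply(ctm, float(node.get("x2")), float(node.get("y2")))
            flat.append((p1, p2))
        for child in node:
            walk(child, ctm)

    walk(ET.fromstring(svg_text), IDENTITY)
    return flat


EXAMPLE_SVG = (
    '<svg xmlns="http://www.w3.org/2000/svg">'
    '<g transform="translate(10,5)"><g transform="scale(2)">'
    '<line x1="0" y1="0" x2="3" y2="4"/>'
    '</g></g></svg>'
)
print(flatten_lines(EXAMPLE_SVG))  # [((10.0, 5.0), (16.0, 13.0))]
```

After flattening, the nested groups no longer matter: the line is scaled first and then translated, and the result is stored as plain coordinates.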

As a reference, SVG is a widely used image representation format on the web. As the name suggests, it refers to vector graphics that do not lose quality when resized. This makes it a standard format for displaying sharp and flexible graphics, particularly effective for logos, icons, charts, and animations that undergo frequent scaling.

Step 2: Feature-Centric Graph Construction

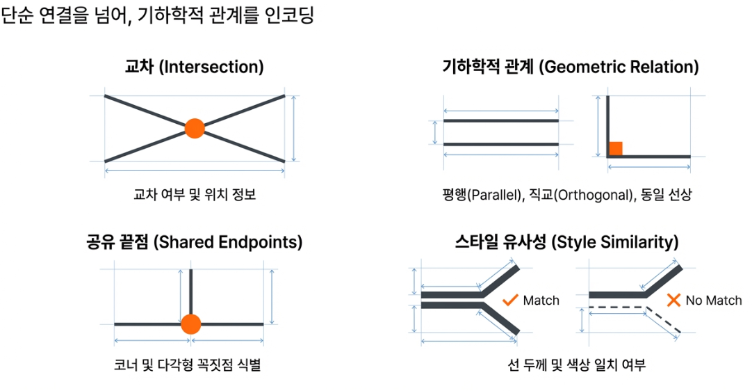

This stage is the core of VectorGraphNet. Each geometric object (SVG path) is transformed into an information-rich “Node,” and the relationships between them are defined as “Edges.”

- Node Representation: Each node contains multi-dimensional information beyond simple coordinates.

– Geometric Attributes: Shape characteristics such as length, curvature, and area. For example, curvature is a decisive factor in distinguishing the trajectory of a door (an arc) from a wall (a line).

– Style Attributes: Visual features like line weight and color, which carry significant meaning in drafting standards. For instance, line weight can indicate an object’s importance or whether it represents a cross-section.

– Topological Attributes: Information on whether an object is a closed shape (like a column) or an open line (like a wall centerline).

- Edge Generation: Edges defining the relationships between nodes are also more than just connection lines. They are assigned rich geometric relationship features such as intersections, parallel/orthogonal status, shared endpoints, and style similarities. Directly embedding these types of relationships into the edges is a key advancement over traditional models that only consider proximity. This allows the network to deeply learn the “Engineering Grammar” of the drawing.

Constructing the data as a graph enables a high-level understanding of the drawing by revealing the intricate relationships between its geometric objects.
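A toy version of this construction, restricted to straight segments, might look like the following. It is a sketch under stated assumptions rather than the published feature set: the feature names, the tolerances, and the rule for when two nodes get an edge are all hypothetical, and the all-pairs loop is the simplest possible way to find related objects.

```python
import math
from itertools import combinations

ANGLE_TOL = math.radians(1.0)  # assumed tolerance for parallel/orthogonal
POINT_TOL = 1e-6               # assumed tolerance for "same endpoint"


def node_features(seg):
    """Geometric and style attributes of one straight segment."""
    (x1, y1), (x2, y2) = seg["p1"], seg["p2"]
    return {
        "length": math.hypot(x2 - x1, y2 - y1),
        # Direction folded into [0, pi) so a segment and its reverse agree.
        "angle": math.atan2(y2 - y1, x2 - x1) % math.pi,
        "stroke_width": seg["stroke_width"],
    }


def edge_features(a, b):
    """Relationship attributes between two segments."""
    diff = abs(node_features(a)["angle"] - node_features(b)["angle"])
    diff = min(diff, math.pi - diff)  # smallest angle between the two lines
    shared = any(
        math.dist(p, q) <= POINT_TOL
        for p in (a["p1"], a["p2"])
        for q in (b["p1"], b["p2"])
    )
    return {
        "shared_endpoint": shared,
        "parallel": diff <= ANGLE_TOL,
        "orthogonal": abs(diff - math.pi / 2) <= ANGLE_TOL,
        "same_style": a["stroke_width"] == b["stroke_width"],
    }


def build_graph(segments):
    """Return (nodes, edges); edges maps (i, j) to relationship features."""
    nodes = [node_features(s) for s in segments]
    edges = {}
    for i, j in combinations(range(len(segments)), 2):
        feats = edge_features(segments[i], segments[j])
        # Assumed rule: connect two nodes when any geometric relation holds.
        if feats["shared_endpoint"] or feats["parallel"] or feats["orthogonal"]:
            edges[(i, j)] = feats
    return nodes, edges


segments = [
    {"p1": (0.0, 0.0), "p2": (4.0, 0.0), "stroke_width": 0.5},
    {"p1": (4.0, 0.0), "p2": (4.0, 3.0), "stroke_width": 0.5},
    {"p1": (0.0, 3.0), "p2": (4.0, 3.0), "stroke_width": 0.18},
]
nodes, edges = build_graph(segments)
```

Here segments 0 and 1 meet at a corner and share a line weight, while segments 0 and 2 are parallel but drawn in different weights. The edge features carry exactly that information, which a proximity-only graph would not.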

Step 3: Learning via Graph Attention Networks (GAT)

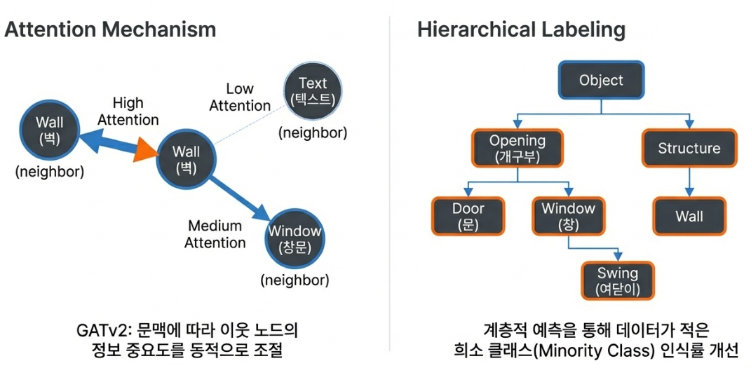

The model is trained on the constructed graph using a Graph Attention Network (GAT), which employs an “attention mechanism.” Attention refers to the ability to weight important information more heavily, a concept that also plays a central role in the Transformers underlying generative AI. For example, when the model analyzes a ‘Wall’ node, it can learn to assign higher weights to neighboring ‘Wall’ or ‘Window’ nodes and lower weights to relatively irrelevant ‘Text’ nodes.
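The attention step can be illustrated with a single-head layer in the style of the original GAT formulation: project each node, score every neighbor with a LeakyReLU over the concatenated pair, normalize the scores with a softmax, and take the weighted sum. The sketch below is generic and written in plain Python for readability. It does not use edge features and is not VectorGraphNet's actual layer, and the weights `W` and `a` are hand-picked for the example rather than learned.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def project(W, h):
    """Matrix-vector product W @ h using plain lists."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]


def gat_layer(features, neighbors, W, a):
    """Single-head graph attention.

    features:  one feature vector per node
    neighbors: neighbors[i] lists the nodes that node i attends to
               (include i itself for a self-loop)
    W:         projection matrix, a: attention vector of length 2 * out_dim
    """
    z = [project(W, h) for h in features]
    outputs, attention = [], []
    for i, nbrs in enumerate(neighbors):
        # Unnormalized score for each neighbor j: LeakyReLU(a . [z_i || z_j])
        scores = [
            leaky_relu(sum(w * v for w, v in zip(a, z[i] + z[j])))
            for j in nbrs
        ]
        top = max(scores)  # subtract the max for a numerically stable softmax
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alpha = [e / total for e in exps]
        attention.append(dict(zip(nbrs, alpha)))
        outputs.append([
            sum(w * z[j][k] for w, j in zip(alpha, nbrs))
            for k in range(len(z[i]))
        ])
    return outputs, attention


# Toy graph: nodes 0 and 1 look like walls, node 2 looks like text.
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
neighbors = [[0, 1, 2], [0, 1], [0, 2]]
W = [[1.0, 0.0], [0.0, 1.0]]  # identity projection (illustrative)
a = [0.0, 0.0, 1.0, -1.0]     # hand-picked: favors wall-like neighbors
outputs, attention = gat_layer(features, neighbors, W, a)
```

With these illustrative weights, node 0 gives the other wall-like node a larger attention weight than the text-like node, and its weights sum to 1. In a trained model, `W` and `a` would be learned from labeled drawings.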

Furthermore, a “Hierarchical Labeling” technique is used to understand objects across multiple layers. Instead of simply classifying an object as a ‘Door,’ it simultaneously predicts the upper category ‘Opening’ and sub-attributes like ‘Swing Door.’ This architectural choice plays a decisive role in dramatically increasing the recognition rate of rare objects that are typically difficult to learn, which is the secret behind VectorGraphNet’s superior Weighted F1 Score.
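One common way to train such a two-level prediction is to give the model a coarse head and a fine head and add their cross-entropy losses. The article does not spell out the loss VectorGraphNet uses, so the sketch below is an assumption for illustration only. The class names, the hierarchy, and the `coarse_weight` parameter are all hypothetical.

```python
import math

# Hypothetical two-level label hierarchy.
COARSE = ["opening", "structure"]
FINE = ["swing_door", "sliding_door", "window", "wall", "column"]
PARENT = {
    "swing_door": "opening",
    "sliding_door": "opening",
    "window": "opening",
    "wall": "structure",
    "column": "structure",
}


def cross_entropy(logits, target_index):
    """Negative log-probability of the target under softmax(logits)."""
    top = max(logits)
    log_sum = top + math.log(sum(math.exp(x - top) for x in logits))
    return log_sum - logits[target_index]


def hierarchical_loss(coarse_logits, fine_logits, fine_label, coarse_weight=0.5):
    """Fine-level loss plus a weighted coarse-level loss for one object."""
    coarse_label = PARENT[fine_label]
    fine_term = cross_entropy(fine_logits, FINE.index(fine_label))
    coarse_term = cross_entropy(coarse_logits, COARSE.index(coarse_label))
    return fine_term + coarse_weight * coarse_term


fine_logits = [1.0, 0.5, 0.2, -1.0, -1.0]
good = hierarchical_loss([2.0, 0.0], fine_logits, "swing_door")
bad = hierarchical_loss([0.0, 2.0], fine_logits, "swing_door")
```

The loss is lower when the coarse head agrees that the object is an opening (`good`) than when it does not (`bad`). Under this kind of objective, a rare fine class still receives a training signal through its better-populated parent category.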

For reference, the Weighted F1 Score is a metric used in multi-class classification: the F1 score is calculated for each class and then averaged in proportion to the number of data points in each class. In simpler terms, it is an average F1 score in which classes with more data carry more weight. It is frequently used to evaluate a model’s overall performance on imbalanced datasets. While it provides a realistic performance assessment, it can mask poor performance on smaller classes. VectorGraphNet addresses the underlying weakness on rare classes with the hierarchical labeling technique mentioned above.
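The difference between a support-weighted average and a plain (macro) average is easiest to see on a tiny, made-up example. The sketch below computes both from scratch; the labels and predictions are invented for illustration and are not taken from any benchmark.

```python
from collections import Counter


def per_class_f1(y_true, y_pred):
    """F1 score for every class that appears in y_true."""
    scores = {}
    for cls in sorted(set(y_true)):
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores[cls] = 2 * tp / denom if denom else 0.0
    return scores


def weighted_f1(y_true, y_pred):
    """Per-class F1 averaged in proportion to each class's support."""
    support = Counter(y_true)
    f1 = per_class_f1(y_true, y_pred)
    return sum(f1[c] * support[c] for c in f1) / len(y_true)


def macro_f1(y_true, y_pred):
    """Per-class F1 averaged with equal weight per class."""
    f1 = per_class_f1(y_true, y_pred)
    return sum(f1.values()) / len(f1)


# Nine walls and one hydrant; the model predicts "wall" for everything.
y_true = ["wall"] * 9 + ["hydrant"]
y_pred = ["wall"] * 10
print(round(weighted_f1(y_true, y_pred), 3))  # 0.853
print(round(macro_f1(y_true, y_pred), 3))     # 0.474
```

The model never finds the hydrant, yet its weighted score stays above 0.85 because the wall class dominates the average. This is why per-class results are worth checking alongside the weighted figure.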

Remarkable Performance: Lightweight yet Powerful

The performance of VectorGraphNet can be summarized by three core advantages:

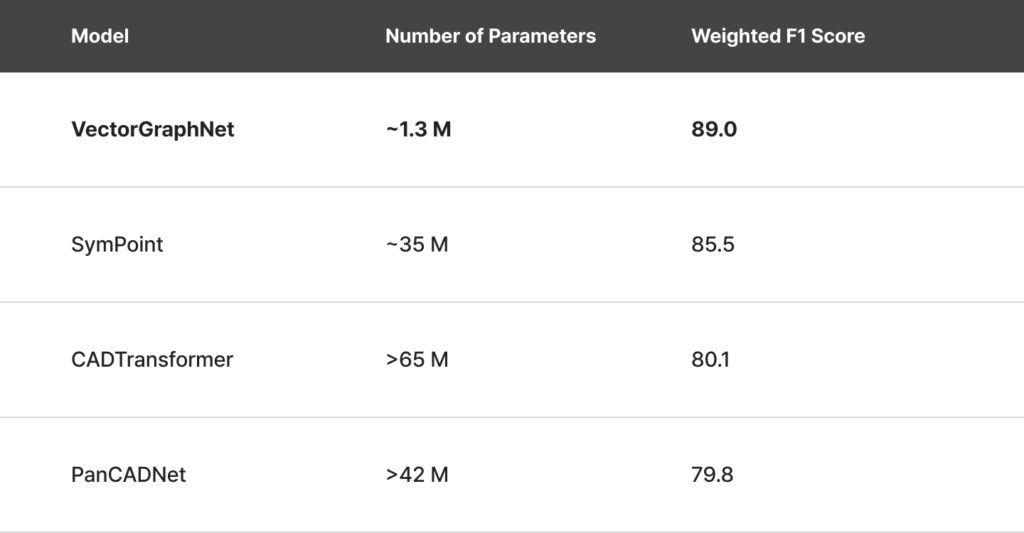

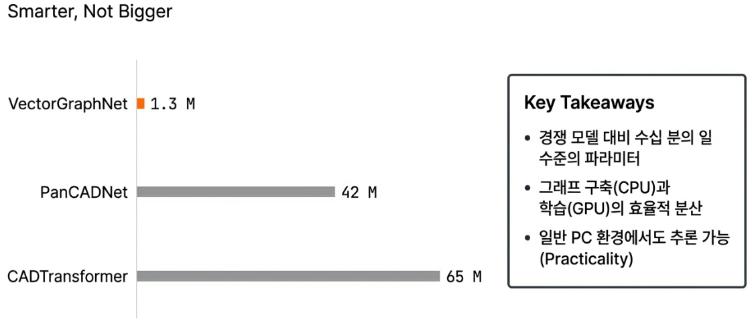

- Overwhelming Computational Efficiency

VectorGraphNet has only about 1.3 million (1.3M) parameters, the figure that determines model size. That makes it at least 30 times smaller than competitor models such as PanCADNet (over 42M) or CADTransformer (over 65M). This efficiency enables high-speed inference even in environments without high-end GPUs.

- Quantitative Performance: Outperforming Competitors

It is not just lightweight; its performance is top-tier.

VectorGraphNet recorded a Weighted F1 Score of 89.0 on datasets with significant class imbalance, outperforming its competitors despite having the fewest parameters. The significance is even clearer when compared to its strongest rival, SymPoint. While SymPoint shows strength in recognizing major classes with abundant data (like walls), VectorGraphNet takes the lead in the Weighted F1 Score. This demonstrates the difference between a model that only excels at “common things” and one that performs “consistently across all things,” proving that VectorGraphNet is a more reliable real-world solution that doesn’t miss rare but critical objects like fire hydrants or special symbols.

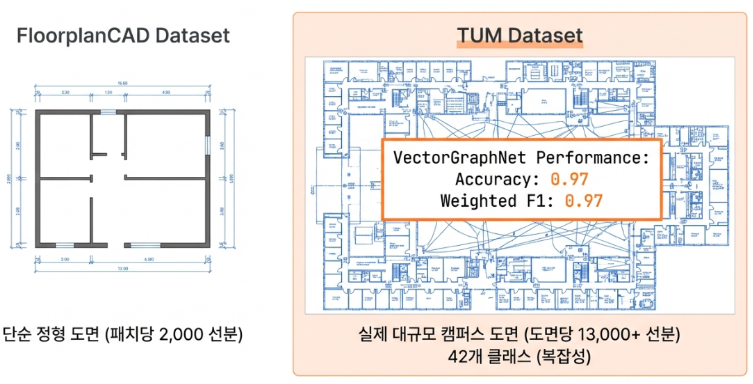

- Robustness in Real-World Datasets

VectorGraphNet demonstrated overwhelming performance on the TUM Dataset, which consists of complex and imbalanced real-world drawings from a university campus. It achieved an accuracy of 0.97 and a Weighted F1 Score of 0.97. This serves as clear evidence that as drawings become larger and more complex, a graph-based approach that learns structural integrity is far more powerful than pixel-based methods.

Changing the Future: Accelerating Digital Transformation in the AEC Industry

VectorGraphNet has the potential to change how the AEC industry works in two main ways:

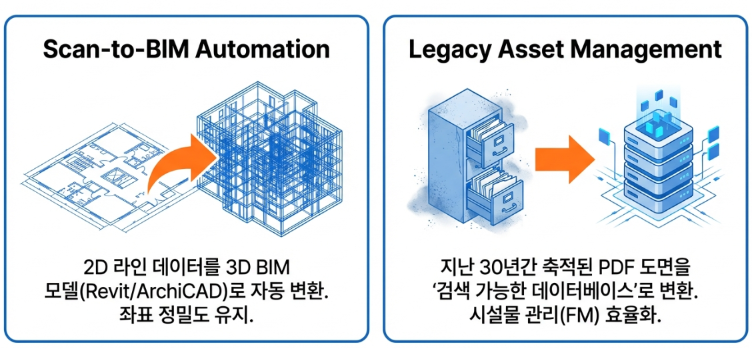

- BIM Automation: The 2D drawing objects precisely segmented by VectorGraphNet can be used directly to generate 3D BIM models automatically. Since coordinate precision is maintained, accurate BIM models can be constructed rapidly without the need for manual corrections.

- Assetization of Legacy Data: Countless historical PDF drawings that were previously stagnant can be converted into searchable and analyzable digital databases. This will dramatically reduce the time required to identify existing building information for facility management, remodeling, and expansion projects.

Considerations and Future Challenges

As with all technologies, VectorGraphNet has its own set of limitations and challenges.

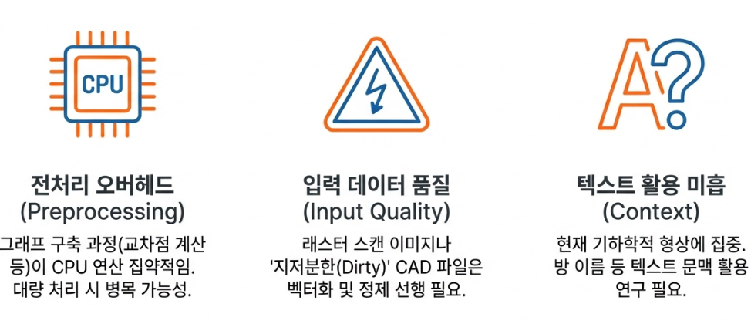

- Preprocessing Overhead: While the neural network model itself is lightweight, the preprocessing required to construct a graph from a drawing is CPU-intensive. This can become a bottleneck in the overall pipeline.

- Dependency on Input Data Quality: The “Garbage In, Garbage Out” principle applies. Performance may degrade on “noisy” inputs, such as scanned drawings that contain no vector geometry or CAD files with broken lines.

- Absence of Textual Information Utilization: The current architecture focuses primarily on geometric forms. It does not yet actively utilize textual information within drawings, such as room names or dimensions. This remains a key area for future improvement.

Toward a New Standard for CAD Recognition

VectorGraphNet is an innovative technology that successfully achieves both efficiency and precision in the field of CAD drawing recognition. Although some challenges like preprocessing overhead remain, its ability to deliver peak performance on complex, imbalanced real-world drawings using an ultra-lightweight model demonstrates its immense potential. By moving beyond the outdated pixel-based paradigm and understanding the structural essence of drawings, VectorGraphNet is well-qualified to become the new standard for accelerating digital transformation in the AEC industry.