From Talking AI to Acting AI: The Backbone Lies in Infrastructure

No matter how brilliant a brain is, what use is it if the hands and feet are tied? To evolve beyond a simple chatbot into an AI Agent that performs tasks independently, a robust Connectivity Infrastructure is as essential as the model’s intelligence. This infrastructure links the AI to the outside world, enabling it to call APIs, handle authentication (Auth), and execute tools. How well it does these things ultimately determines the system’s performance and how far it can scale.

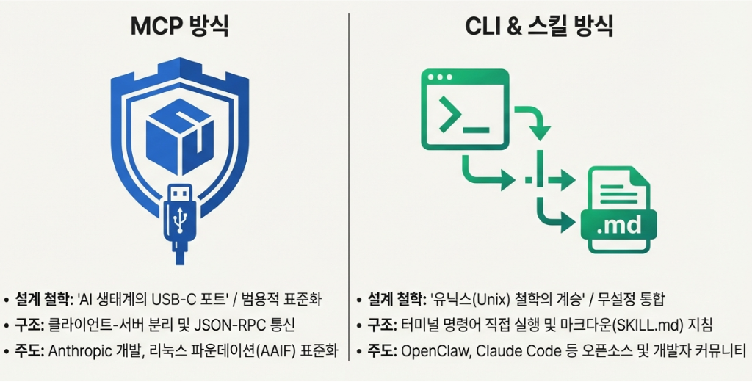

Interestingly, the methods for giving an agent its hands and feet are currently split into two completely different paths. One is the venerable CLI (Command Line Interface), which has sustained the IT ecosystem for decades since the 1970s. The other is the ambitious new standard MCP (Model Context Protocol), designed specifically for the AI era.

Between these two architectures, which span over half a century, which approach is better suited for our agent systems? Let us explore the unique appeal and practical value of both technologies to find the answer for optimal design.

“MCP (Model Context Protocol) Approach: A New Standard for Agents”

The Limits of Unstandardized Connectivity: Fragmentation Blocking AI Integration (The N×M Problem)

No matter how smart an AI is, if it cannot access internal company data, it remains nothing more than a book-smart assistant. When attempting to connect AI with internal systems via APIs, we immediately face a grim reality. For instance, to connect 3 AI models to 5 internal systems such as databases or messengers, one must develop 15 (3×5) individual pieces of custom code. Every time a new tool is introduced, another connector must be written for each model, so the workload grows multiplicatively. This creates uncontrollable fragmentation (the N×M problem), much like the frustration of dealing with different power outlet standards for every country, where you must carry a specific adapter for every single device you plug in.
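
The arithmetic behind the N×M problem can be sketched in a few lines. This is a minimal illustration, assuming one connector per model-system pair in the custom approach and one protocol implementation per participant when a shared standard is used; the function names are made up for this example.

```python
def point_to_point(models: int, systems: int) -> int:
    """Custom integration: every model needs its own connector to every system."""
    return models * systems


def shared_protocol(models: int, systems: int) -> int:
    """Shared standard: each model and each system implements the protocol once."""
    return models + systems


if __name__ == "__main__":
    # The 3 models x 5 systems case from the text, then one extra system.
    for models, systems in [(3, 5), (3, 6)]:
        print(models, systems, point_to_point(models, systems), shared_protocol(models, systems))
```

Adding a sixth system costs three new connectors in the point-to-point setup, but only one new implementation when everything speaks the same protocol.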

The Emergence of a Universal USB-C Port for the AI Ecosystem

The Model Context Protocol (MCP) introduced by Anthropic is what saves us from this swamp of spaghetti code. It was a declaration to stop building separate lines for every AI model and system and instead create a single standard used by the entire world. Now, all that is needed is to install a sturdy standard power strip called MCP at the center of the system. Whether a new AI model or a database is added, they can communicate just by clicking them into the established standard. Now governed as an open standard under the Agentic AI Foundation (AAIF), MCP has become the massive artery unifying the AI agent ecosystem.

How exactly does this massive artery, or standard power strip, work? The key lies in packaging complex functions into standardized boxes. By placing existing complex programs into standardized boxes called MCP Servers, AI agents can communicate freely with them through a single unified format. This grants the agent three powerful weapons.

- Tools: Actions the agent can execute directly, such as searching a database or sending an email.

- Resources: Knowledge like internal documents or data that the agent can refer to.

- Prompts: Work guidelines that keep the agent on track and prevent it from getting lost.
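
To make the three primitives concrete, here is a rough sketch of the kind of messages involved. MCP messages travel in a JSON-RPC 2.0 envelope, and a tool is described by a name, a description, and a JSON Schema for its input. The tool name, resource URI, and prompt name below are hypothetical placeholders, not part of any real server.

```python
import json

# Hypothetical tool descriptor, shaped like one entry a server lists for its tools.
SEARCH_TOOL = {
    "name": "search_orders",  # hypothetical tool name
    "description": "Search the order database by customer name.",
    "inputSchema": {
        "type": "object",
        "properties": {"customer": {"type": "string"}},
        "required": ["customer"],
    },
}


def make_request(request_id: int, method: str, params: dict) -> str:
    """Build a JSON-RPC 2.0 request string."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": method, "params": params})


# One request per primitive: call a tool, read a resource, fetch a prompt.
call_tool = make_request(1, "tools/call", {"name": "search_orders", "arguments": {"customer": "Kim"}})
read_resource = make_request(2, "resources/read", {"uri": "file:///docs/handbook.md"})
get_prompt = make_request(3, "prompts/get", {"name": "weekly_report", "arguments": {}})
```

Because every server answers the same small set of methods, the agent side does not need custom code per system.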

Why Enterprises Are Enthusiastic About MCP: Ironclad Security

When such a powerful weapon to freely handle diverse systems is placed in the hands of an AI agent, a significant concern inevitably follows. It is a fundamental question of trust: “Is it truly safe to allow this AI direct access to our company’s core databases or systems?” This is precisely where the true value of MCP shines. The decisive reason why so many enterprises are adopting MCP as their standard infrastructure, despite it being a new technology, lies in Security and Isolation.

In an MCP environment, each tool typically runs in its own independent room (process), separated from the others. Even if a problem arises in a specific tool due to an external attack or an error, this structure is designed to keep it from spreading to the entire infrastructure. Furthermore, for dangerous and critical tasks such as deleting files or making payments, safety measures can be implemented to ensure they must go through final human approval. This provides enterprises with the peace of mind they need to deploy AI at scale.
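
The human approval step can be pictured as a small gate in front of tool execution. The sketch below is only an illustration of the idea, not how any particular MCP client implements it; the tool names and the `approve` callback are assumptions made for this example.

```python
from typing import Callable

# Hypothetical set of tool names that must not run without a human decision.
DANGEROUS_TOOLS = {"delete_file", "make_payment"}


def run_tool(name: str, action: Callable[[], str], approve: Callable[[str], bool]) -> str:
    """Run a tool, asking the approver first if the tool is marked dangerous."""
    if name in DANGEROUS_TOOLS and not approve(name):
        return f"{name}: blocked, approval denied"
    return action()


def deny_all(tool_name: str) -> bool:
    """Stand-in for a real approval prompt; always refuses."""
    return False
```

For instance, `run_tool("delete_file", lambda: "deleted", deny_all)` returns the blocked message, while a harmless tool runs without asking anyone.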

The Fatal Achilles’ Heel of the Seemingly Perfect MCP

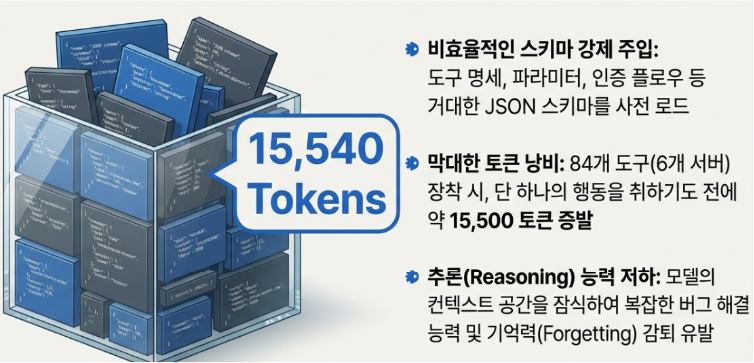

Despite such powerful universality and security, MCP runs into very distinct technical limitations in actual production environments. The most representative issue is the so-called Context Bloat phenomenon.

To prevent agent malfunctions, MCP defines tool input parameters and constraints using very strict and detailed JSON schemas (instruction manuals). The core of the problem is that the entire, extensively written specification for all these tools must be preemptively injected into the Context Window before the agent even begins its task. To use an analogy, it is like a new employee (AI) being forced to memorize the thick instruction manuals for dozens of machines in the office the moment they arrive at work, without even knowing which tasks they will be assigned.

In practice, connecting just 6 servers with approximately 84 tools causes over 15,500 tokens to evaporate solely on internalizing these manuals before receiving the first instruction. In large-scale enterprise environments, this can consume 50,000 to 100,000 tokens. This robs the model of its core Reasoning space, degrading its output quality and leading to a massive API cost bomb.
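
A back-of-the-envelope estimate shows how this overhead adds up. The sketch assumes that schema size scales linearly with the number of tools, using the 15,500 tokens for 84 tools quoted above as the baseline, and that the schemas are sent with every request. The price per million tokens is a placeholder, not a real quote.

```python
BASELINE_TOKENS = 15_500  # figure quoted in the text for 84 tools
BASELINE_TOOLS = 84


def schema_overhead(tool_count: int) -> int:
    """Estimated schema tokens for a given tool count (linear-scaling assumption)."""
    return round(tool_count * BASELINE_TOKENS / BASELINE_TOOLS)


def overhead_cost(tool_count: int, requests: int, usd_per_million_tokens: float) -> float:
    """Estimated spend on schema tokens alone, before any real work is done."""
    return schema_overhead(tool_count) * requests * usd_per_million_tokens / 1_000_000


if __name__ == "__main__":
    # Placeholder price of 3.0 USD per million input tokens.
    print(schema_overhead(84), overhead_cost(84, 1_000, 3.0))
```

Under these assumptions, each tool costs roughly 185 tokens of context, and the bill grows with every request that carries the full set of schemas.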

Additionally, there is a heavy implementation overhead known as the Wrapper Tax. To align existing APIs with this new universal standard, one must undergo the cumbersome hosting task of rewriting interfaces and wrapping them in new packaging called an MCP Server.

2026: An Ecosystem Evolving Beyond Limitations

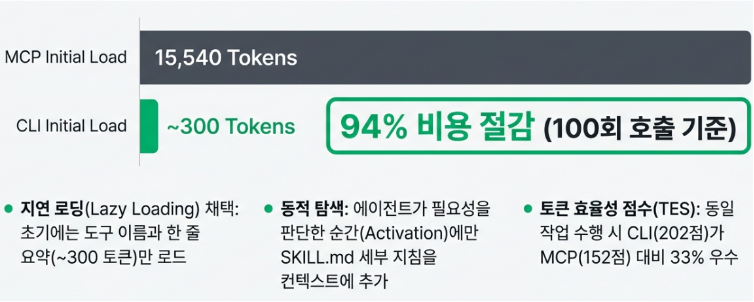

Fortunately, these shortcomings are being rapidly addressed. Instead of injecting thick manuals from the start, Lazy Loading technology retrieves instructions only when necessary, reducing this token waste by up to 95%. Furthermore, the ecosystem is evolving beyond the frustration of exchanging only text, adding features that allow AI to directly display visual interfaces (UI) such as dashboards or buttons in the chat window.

“The CLI (Command Line Interface) Approach: A Proven Universal Interface”

Developers weary of heavy JSON schemas and the wrapper tax are turning their eyes back to the oldest and most proven interface in software history: the rugged black-and-white screen of the Command Line Interface (CLI), paired with the Skill architecture. Let us explore why next-generation AI agents are returning to the 50-year-old Unix philosophy instead of relying solely on the latest protocols.

The Agent’s Mother Tongue: Zero Wrapper Tax

Large Language Models (LLMs) have already learned vast amounts of shell scripts and technical documentation during their training. In other words, commands like git, docker, and aws are the Mother Tongue for agents, requiring no translation. Without the need to create complex intermediary servers or packaging like MCP, simply providing an empty terminal window instantly turns tens of thousands of global CLI tools into the agent’s arms and legs.

Context Diet and Progressive Disclosure

The CLI approach does not force the injection of all manuals from the start. Upon beginning a task, the agent lightly scans only the skill names and short summaries in a SKILL.md file, consuming about 90 tokens. Detailed instructions or the --help output are read dynamically only at the exact moment a specific tool is truly needed. Thanks to this Progressive Disclosure, while MCP spends 15,500 tokens for 84 tools, the CLI approach operates with just 300 tokens. This saving of roughly 98% in brain capacity can be devoted entirely to Reasoning.
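
Progressive Disclosure can be sketched as a two-level lookup: a short index shown up front and full instructions loaded on demand. The `Skill` structure below is a hypothetical stand-in for a parsed SKILL.md file, not an actual file format definition.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    summary: str  # short line shown to the agent up front
    body: str     # full instructions, loaded only on demand


def index_view(skills: list[Skill]) -> str:
    """What the agent sees at task start: names and summaries only."""
    return "\n".join(f"{s.name}: {s.summary}" for s in skills)


def load_skill(skills: list[Skill], name: str) -> str:
    """Return the full instructions for one skill when it is actually needed."""
    for s in skills:
        if s.name == name:
            return s.body
    raise KeyError(name)


def saving(eager_tokens: int, lazy_tokens: int) -> float:
    """Fraction of tokens saved by loading lazily instead of eagerly."""
    return 1 - lazy_tokens / eager_tokens
```

With the figures quoted above, `saving(15_500, 300)` comes out at about 0.98, which is where most of the freed-up context comes from.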

The Triumph of Unix Philosophy and Composability

When analyzing large log files, MCP often causes bottlenecks by pouring massive chunks of text entirely into the AI’s memory. In contrast, a CLI agent utilizes the power of Pipes (|).

kubectl get pods | grep "api" | awk '{print $1}'

By filtering unnecessary data within the terminal first and returning only the refined core results as context, the CLI approach prevents cognitive overload for the AI and dramatically reduces costs.
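
The effect of that pipeline can be reproduced in a few lines to show how much smaller the returned context becomes. The pod listing below is made-up sample output for illustration; real `kubectl get pods` output will differ.

```python
# Hypothetical sample of `kubectl get pods` output.
RAW_OUTPUT = """\
NAME                      READY   STATUS    RESTARTS   AGE
api-7d9f8b6c5-abcde       1/1     Running   0          2d
api-7d9f8b6c5-fghij       1/1     Running   1          2d
worker-5c6d7e8f9-klmno    1/1     Running   0          5h
db-0                      1/1     Running   0          9d
"""


def filter_pods(raw: str, keyword: str) -> list[str]:
    """Keep lines containing the keyword (grep), then take the first column (awk '{print $1}')."""
    return [line.split()[0] for line in raw.splitlines() if keyword in line and line.split()]


refined = filter_pods(RAW_OUTPUT, "api")
print(refined)
print(len(RAW_OUTPUT), "characters reduced to", len("\n".join(refined)))
```

Only the two matching pod names reach the model; the header, the unrelated pods, and the extra columns never enter the context.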

An Exploding Ecosystem and the Fatal Achilles’ Heel

Based on these strengths, CLI-based workflows such as OpenClaw and Claude Code CLI are currently leading the development ecosystem. However, this powerful freedom comes with the shadow of Security Vulnerabilities. Unlike the strictly isolated MCP, a CLI agent executes commands directly with the permissions of the host computer. Attacks involving data theft through malicious skills, such as ClawHavoc, have painfully demonstrated these risks. Therefore, a Sandbox that confines dangerous commands like rm -rf is an absolute necessity for implementing CLI agents in practical work. Dedicated MicroVM platforms like E2B and Modal have now established themselves as essential safety mechanisms.

The Disposable Bulletproof Lab: MicroVMs Stopping AI’s Dangerous Run

The commands issued by an agent in a CLI environment are so free that they can sometimes be fatal. Platforms like E2B and Modal, which emerged to control this, differ from the heavy Virtual Machines (VMs) or standard Docker containers we typically know. They are MicroVMs, ultra-lightweight sandboxes stripped down to the extreme solely for the purpose of executing an AI agent’s code.

To use an analogy, it is like a temporary lab surrounded by bulletproof glass that can be used once and then ruthlessly discarded.

While starting a typical virtual machine takes tens of seconds to several minutes, a MicroVM provided by E2B or Modal is created in a fraction of a second at the moment the agent attempts to execute code. Within this isolated temporary environment, the agent can freely create files, download external packages, or even execute suspicious malicious code.

However, the moment the task is successfully completed or the agent commits a fatal error like rm -rf, the entire lab is immediately destroyed without leaving a single speck of dust on the main host system. Ultimately, the decisive reason why the CLI architecture, once considered the most vulnerable in terms of security, could be proudly adopted in enterprise production environments is the emergence of these powerful MicroVM platforms that safely confine and extinguish all unexpected actions of an agent within 0.1 seconds.
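
The create, run, destroy lifecycle of such a disposable lab can be imitated locally. This is only a sketch of the lifecycle under a big assumption: a temporary directory stands in for the MicroVM. It provides no real isolation, and it does not use the E2B or Modal APIs.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_in_disposable_dir(code: str) -> tuple[str, Path]:
    """Run Python code in a temporary directory that is deleted afterwards.

    NOTE: this shows only the create-run-destroy lifecycle. A temp directory
    is NOT a security boundary; real isolation needs a microVM or sandbox.
    """
    with tempfile.TemporaryDirectory() as workdir:
        result = subprocess.run(
            [sys.executable, "-c", code],
            cwd=workdir,
            capture_output=True,
            text=True,
            timeout=30,
        )
        used = Path(workdir)
    # The directory and everything written into it is gone at this point.
    return result.stdout.strip(), used


output, workdir = run_in_disposable_dir(
    "open('scratch.txt', 'w').write('temp'); print('done')"
)
```

After the call returns, the output survives but the scratch file and its directory do not, which is the same pattern a MicroVM platform applies to an entire machine.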

“CLI vs MCP: A Comparative Analysis of Core Characteristics”

As we have explored, the methods for providing “hands and feet” to an Acting AI Agent to connect it with the outside world are divided into two major philosophies. Here is a summary of their characteristics.

- MCP (Model Context Protocol): This is a structure that encapsulates every tool within an independent box. Its greatest weapon is Ironclad Security, protecting the host system through powerful process isolation. On the other hand, its weaknesses include Context Bloat, where the agent is forced to memorize tens of thousands of tokens of schemas before starting a task, and the development overhead required to create dedicated servers.

- CLI & Skill: This is a structure where the agent issues commands directly within a terminal. Its greatest weapon is Overwhelming Token Efficiency, as it retrieves instructions only when necessary, combined with zero integration costs. Conversely, it suffers from Security Vulnerabilities due to the direct execution of commands with local permissions, making the establishment of a sandbox infrastructure an absolute necessity.

Architectural Comparison at a Glance

The Perfect Harmony: Culinary Ingredients (MCP) and Recipe Cards (Skill)

Who is the final winner? The answer is Both. Future AI agent systems do not pit these two approaches against each other as exclusive competitors.

From an architectural perspective, MCP represents the Culinary Ingredients (Tools) securely provided to the agent. Meanwhile, Skills (CLI) are like the Recipe Cards (Workflow) that instruct the agent on when and how to combine those ingredients to cook.

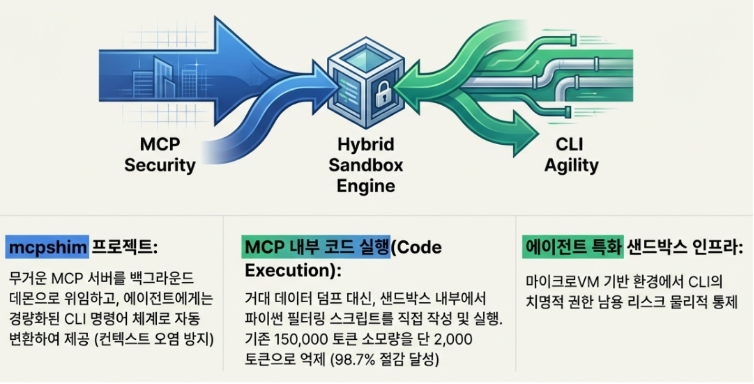

The new standard for enterprise AI integration in 2026 is solidifying into a hybrid architecture that fuses these two. Sensitive corporate data is supplied under ironclad security through an MCP Gateway, while the agent’s logic execution is controlled through the light and agile terminal environment of Skills (CLI). Ultimately, a sophisticated design that places an iron wall (MCP) where protection is needed and grants freedom (CLI) where speed is required will unlock an agent’s full potential.

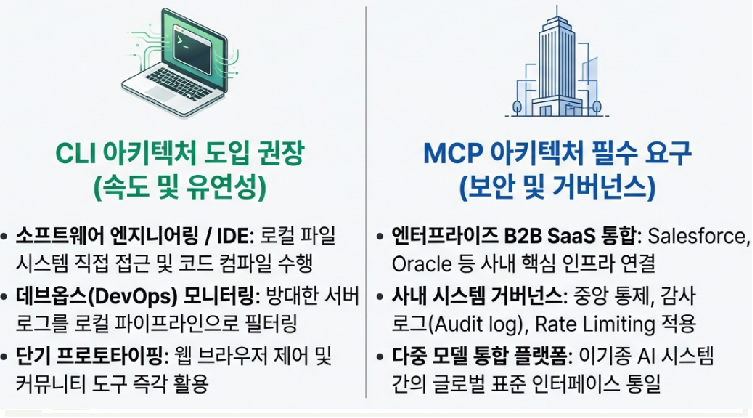

“Optimal Selection Guide: Solving the Triangle of Control, Speed, and Security”

Choosing how to implement an agent is more than a simple selection of a tech stack; it is a fierce architectural decision regarding the balance between Control, Execution Speed, and Security. Interestingly, this decision also dictates which specific AI model will serve as the agent’s brain.

- If universal control and speed are vital (Recommend CLI): This is overwhelmingly advantageous if the goal is direct control of internal legacy infrastructure, automation of complex system management, or rapid prototyping. In this case, language models highly specialized in code generation and system command execution are the best partners. They deliver unparalleled performance in pure technical refactoring and logic implementation.

- If structured precision is required (Recommend MCP): This is suitable for environments requiring strict standards and control, such as customer-facing SaaS agents or standardized tool sharing between internal teams. Models optimized for complex API integration and Tool Use capabilities are the perfect match. They show unique strength in precise and secure external system control.

However, the completion of a true enterprise agent lies in the Triangular Structure. A highly mature system will eventually take a perfect hybrid form: connecting securely to external data via MCP, executing local infrastructure via CLI, and orchestrating the entire process through Skills.
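
One way to picture this hybrid form is as a routing decision made per step of a skill. The sketch below is purely illustrative: the `Step` structure, the sensitivity flag, and the step descriptions are assumptions, and a real system would use richer policies than a single boolean.

```python
from dataclasses import dataclass


@dataclass
class Step:
    description: str
    touches_sensitive_data: bool


def route(step: Step) -> str:
    """Send sensitive steps through MCP and everything else through the CLI."""
    return "mcp" if step.touches_sensitive_data else "cli"


# A hypothetical skill expressed as an ordered list of steps.
SKILL_STEPS = [
    Step("fetch customer records", True),
    Step("summarize log files with grep", False),
    Step("post result to internal messenger", True),
]

plan = [(step.description, route(step)) for step in SKILL_STEPS]
```

The skill supplies the order of the steps, while the routing rule decides which channel carries each one.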

“Future Outlook and Technology Trends: The Era of Grand Unification and Secure Freedom”

The following three technology trends are essential to watch for future system design.

- The Grand Unification of Standardized Governance: Beyond Vendor Lock-in

The competition between fragmented standards from various Big Tech companies is winding down. IBM’s ACP has merged into the Google-led A2A protocol under the Linux Foundation, and MCP has moved to the AAIF (Agentic AI Foundation), which is also hosted by the Linux Foundation. This is consolidating the infrastructure around vendor-neutral, open governance.

- Internalization of Security Infrastructure and Convergence of Sandboxing Technology

To control the powerful permissions of the CLI, execution environment isolation has become an essential survival tool. Currently, Anthropic utilizes bubblewrap for terminals and gVisor for web environments, while Vercel has built Firecracker microVMs into its agent runtime. The Allowlist-Based Proxy pattern, where agents communicate only through approved paths, is becoming the security baseline across implementations.

- Competition for Extreme Performance Optimization through Context Dieting

In the era of hybrid architecture, the core competitive advantage is Lightness. New optimization technologies like UTCP (Universal Tool Calling Protocol), designed to overcome MCP overhead, along with skill-based Progressive Disclosure strategies, will determine the winners in terms of API costs and processing speeds.
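
The Allowlist-Based Proxy pattern mentioned among the trends above boils down to one check before any outbound request leaves the sandbox. Here is a minimal sketch of that check, assuming a fixed set of approved hosts; the host names are placeholders.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts the agent may reach.
ALLOWED_HOSTS = {"api.internal.example.com", "pypi.org"}


def is_allowed(url: str) -> bool:
    """Permit only HTTPS requests whose host is exactly on the allowlist."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in ALLOWED_HOSTS
```

Matching the full host name exactly, rather than by prefix or substring, keeps look-alike domains such as `pypi.org.evil.net` from slipping through.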

In conclusion, the future AI agent infrastructure we will encounter will run on open standards under unified, vendor-neutral governance (AAIF) in place of previously fragmented specifications. Within that backbone, ironclad sandboxing will be internalized like DNA. This ensures that the freedom of the CLI and the powerful connectivity of MCP are finally brought together on the solid foundation of Safety.
