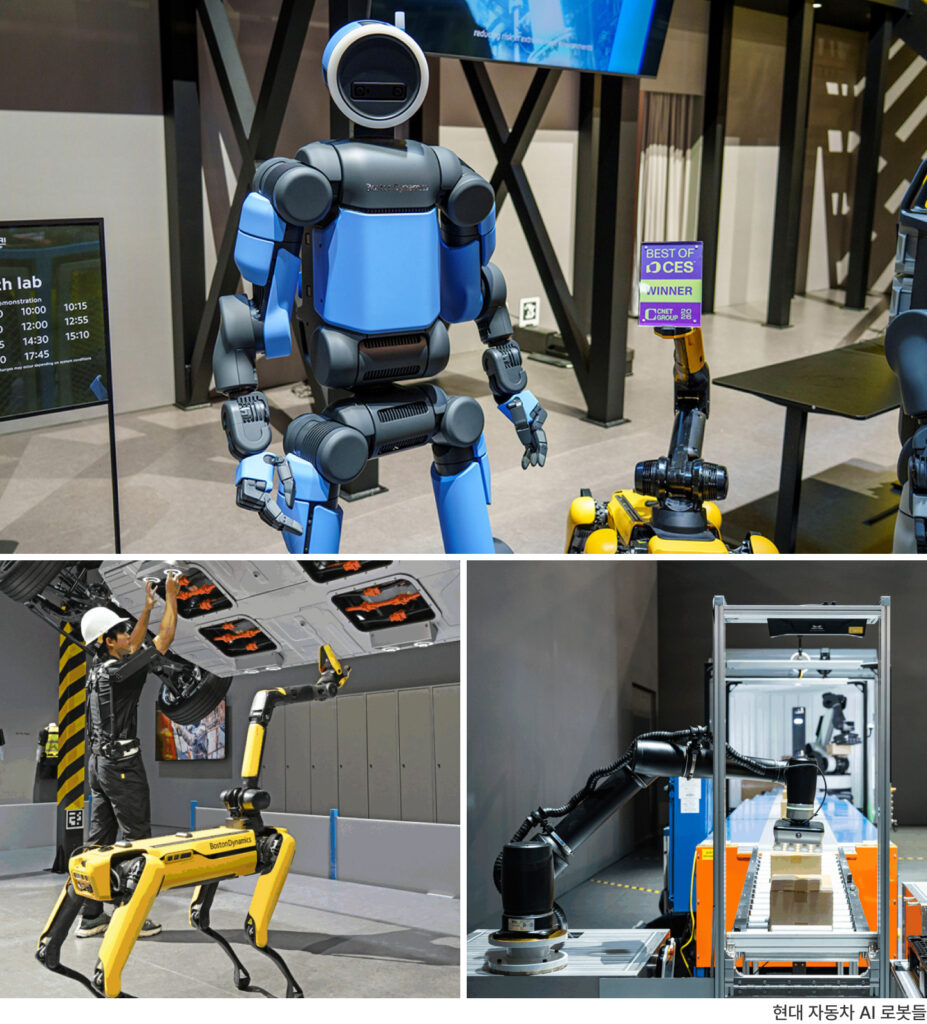

If 2025 was the year generative AI and software agents proved their business value, CES 2026 marked the turning point at which that intelligence expanded into the physical world.

This year’s show floor was not filled with chatbots talking from behind screens, but with robots that actually walk, carry, and work. This signals that ‘Physical AI’ is no longer a distant vision of the future, but a reality that has arrived in our daily lives.

This report analyzes the core trends that permeated CES 2026, focusing on three technological pillars: Action, Physics, and Self-Learning.

1. The Evolution of Action: From Simple ‘Tools’ to Collaborative ‘Colleagues’

The biggest shift at CES 2026 was in the attitude of AI. Where AI used to be a “command executor” that answered human questions, it has now become an “active partner” that perceives situations on its own and proposes the best alternatives.

‘Active Agents Leading the Smart Home’

The key phrase across the Home Appliances and Smart Home pavilions was ‘Active Agent.’ The smart home scenarios presented by global leaders such as Samsung and LG went a step further than expected: individual appliances such as TVs, refrigerators, and robot vacuums were not only connected to one another but also worked as a single team alongside AI home robots.

The smart homes we have experienced so far were essentially “smart remote controls”: they reacted only when a user launched an app or gave a voice command. The appliances unveiled for 2026, by contrast, monitor air quality, lighting levels, and household routines on their own and adjust the environment before the user gives any instruction. This showed that future home appliances will not stop at being “devices that perform functions” but will evolve into an intelligent operating system that understands the whole home and acts first.
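
The difference between a “smart remote control” and an active agent comes down to who starts the action. The minimal sketch below assumes a hypothetical sensor snapshot and hand-picked thresholds (none of these names come from Samsung, LG, or any real smart home API): on every tick, the agent reads the home's state and decides what to do without waiting for a command.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class HomeState:
    # Hypothetical sensor snapshot; field names are illustrative only.
    pm25: float      # fine-dust concentration, micrograms per cubic metre
    lux: float       # ambient light level in the living area
    hour: int        # local hour of day, 0-23
    occupied: bool   # whether anyone is at home

# Hypothetical thresholds, chosen only for illustration.
PM25_LIMIT = 35.0
LUX_MIN = 150.0

def plan_actions(state: HomeState) -> List[str]:
    """Decide actions from sensor data alone, with no user command involved."""
    actions = []
    if state.pm25 > PM25_LIMIT:
        actions.append("air_purifier:on")
    # Only brighten the lights for people who are home, during waking hours.
    if state.occupied and state.lux < LUX_MIN and 6 <= state.hour < 23:
        actions.append("lights:raise")
    # Stand-in for a learned lifestyle pattern: clean while nobody is home.
    if not state.occupied:
        actions.append("robot_vacuum:start")
    return actions

print(plan_actions(HomeState(pm25=50.0, lux=80.0, hour=19, occupied=True)))
# ['air_purifier:on', 'lights:raise']
```

A real system would learn thresholds and routines from the household rather than hard-coding them; the point here is only that the decision is triggered by sensed conditions, not by a user request.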

‘The Agentification of Factories and Logistics’

In the Industrial Pavilion, the focus was not the idealistic slogan of “fully unmanned factories” but AI as a practical “collaborative partner.” Factories are changing from places where machines simply run into a single, large organism orchestrated by AI. Robot arms, autonomous carts, and sensor networks run on an integrated AI platform, and the AI acts as a digital manager operating the whole factory rather than as a machine following a preset scenario.

Where past automation repeated fixed paths, the intelligent factory of 2026 can detect minute vibrations in equipment to predict failures and, when it anticipates a bottleneck, immediately reroute logistics or adjust process parameters on its own. In other words, AI on the manufacturing floor has moved beyond “simple automation” that assists humans and into “Autonomous Operation,” in which it makes its own judgments and drives toward optimal results.
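
Predicting failures from vibration data is commonly treated as anomaly detection. As a hedged illustration only, the sketch below applies a simple rolling z-score to a stream of vibration amplitudes; this is a stand-in technique chosen for clarity, not what any exhibitor described, and the window size and threshold are assumptions. Production systems typically work with frequency-domain features or learned models.

```python
from collections import deque
from statistics import mean, stdev

def detect_vibration_anomalies(readings, window=20, z_threshold=3.0):
    """Return indices of readings that deviate sharply from the recent baseline.

    Each reading is compared with the mean and sample standard deviation of
    the previous `window` readings (a rolling z-score).
    """
    history = deque(maxlen=window)
    alerts = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0 and abs(value - mu) / sigma > z_threshold:
                alerts.append(i)
        history.append(value)
    return alerts

# Hypothetical data: a steady machine hum followed by one sharp spike.
readings = [1.0, 1.1] * 15 + [5.0]
print(detect_vibration_anomalies(readings))  # [30]
```

In a deployment, an alert would feed a maintenance ticket or a rerouting decision; that downstream step is omitted here.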

2. Understanding the Real World (Physics): World Models and Sim-to-Real

Another striking point at CES 2026 was how AI understands the physical world, its “real-world understanding capability.” This capability is a prerequisite for AI to step outside the screen and move safely.

“Learning in Virtual, Applying in Real”: The Universalization of Sim-to-Real

The humanoid and service robot exhibits were a showcase for Sim-to-Real technology (learning in virtual space, then applying the result to reality). Physics simulation platforms such as NVIDIA Isaac Sim and Omniverse in particular emerged as core infrastructure. Robots learned about gravity and friction by falling tens of thousands of times in virtual space, and the resulting control policies were then transferred to real hardware and deployed. Their natural stair-climbing and object-carrying demonstrations in front of visitors signaled that “virtual learning that reflects the laws of physics” has become the standard for next-generation robot intelligence.
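
The post does not say how exhibitors closed the gap between simulation and hardware, but one widely used technique is domain randomization: physics parameters such as friction and mass are resampled for every training episode, so the policy cannot overfit to one idealized world. The sketch below shows only that sampling step, with hypothetical parameter names and ranges; it does not call the Isaac Sim API.

```python
import random
from dataclasses import dataclass
from typing import List

@dataclass
class PhysicsParams:
    friction: float        # ground friction coefficient
    mass_scale: float      # multiplier on the robot's nominal link masses
    motor_delay_ms: float  # actuation latency in milliseconds

# Hypothetical ranges, for illustration only (not taken from any real platform).
RANGES = {
    "friction": (0.4, 1.2),
    "mass_scale": (0.85, 1.15),
    "motor_delay_ms": (0.0, 20.0),
}

def sample_episode_params(rng: random.Random) -> PhysicsParams:
    """Draw a fresh physics configuration for one simulated training episode."""
    return PhysicsParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})

def make_episode_configs(num_episodes: int, seed: int = 0) -> List[PhysicsParams]:
    """Seeded so that a training run can be reproduced exactly."""
    rng = random.Random(seed)
    return [sample_episode_params(rng) for _ in range(num_episodes)]

for params in make_episode_configs(3):
    print(params)
```

Each episode's simulator would be configured from one `PhysicsParams` before the policy is rolled out. A policy that succeeds across the whole range is more likely to tolerate the real robot's unknown friction and latency.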

The Evolution of Autonomous Driving: Bridging the ‘End-to-End’ and ‘Sim-to-Real’ Gap

The biggest topic in the CES Mobility Zone was the technological contrast between ‘Alpamayo,’ the autonomous driving foundation model NVIDIA unveiled at the show, and the established leader, Tesla’s FSD. The two companies are taking opposite approaches toward the same goal of full autonomous driving, and the core issue is how each overcomes the Sim-to-Real Gap (the discrepancy between simulation and reality).

- Tesla: “Reality Itself is the Simulator” (End-to-End Neural Net)

Tesla follows an End-to-End approach, in which a neural network learns to drive entirely from real driving footage (video) collected from millions of vehicles.

- Strength: It handles subtle real-world light reflections and unstructured road situations quickly and intuitively, almost by “instinct.” Because it trains on real data, the Sim-to-Real Gap does not arise in the first place.

- Limitation: It suffers from the ‘Black Box’ problem (it cannot explain why the AI decided to stop, for example), and it is vulnerable in rare (long-tail) situations where data is scarce.

- NVIDIA Alpamayo: “AI That Explains the Reason” (VLA + Sim-to-Real)

In contrast, NVIDIA’s Alpamayo introduced a VLA (Vision-Language-Action) model that translates visual information into language before acting, giving it a “reasoning capability” that can explain why it makes each driving decision.

- Sim-to-Real Strategy: While Tesla fills 99% of its training with real data, NVIDIA generates accident data and extreme (long-tail) situations, which are hard to obtain in reality, inside Omniverse simulations and trains on them.

3. Independence of Learning (Self-Learning): Synthetic Data and Quality Control

“If there isn’t enough data, we create it and use it.”

CES 2026 clearly showed this paradigm shift in AI training data.

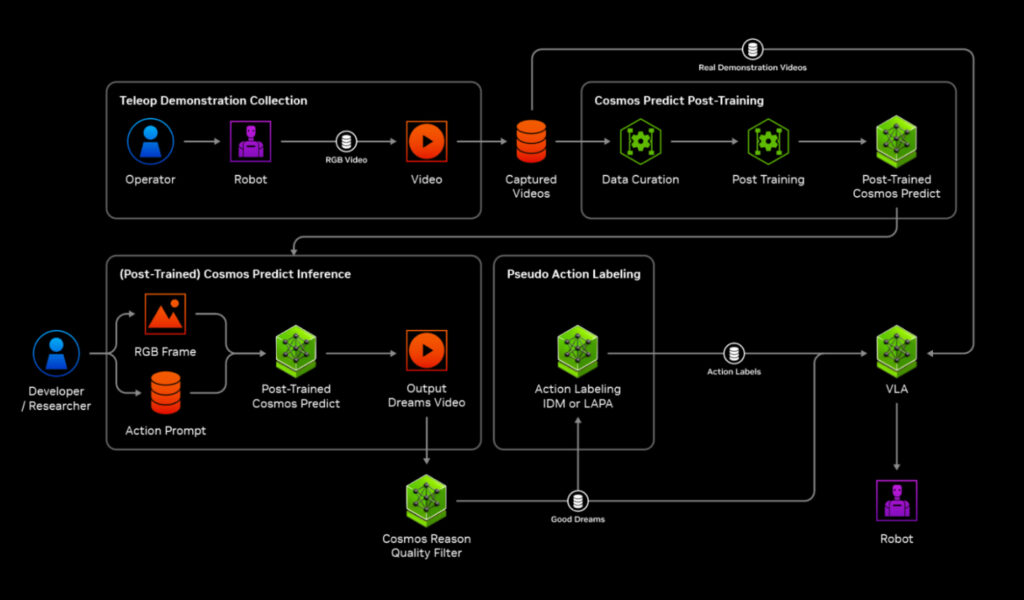

Synthetic Data Factories Creating Rare Situations

In the AI Infrastructure Zone, synthetic data generation platforms for robotics and autonomous driving dominated. Just as collision data is hard to collect on real roads, it is nearly impossible to gather data by actually experiencing fatal errors on industrial sites or extreme, rare situations (corner cases) in the real world.

A prime example of this approach is NVIDIA’s robot development platform, ‘Isaac.’ At this CES, NVIDIA demonstrated how Isaac Sim can generate a virtually unlimited number of scenarios that would be dangerous to recreate in reality, such as a robot slipping on oil on a factory floor or searching for items in a dark warehouse with the lights off. By experiencing millions of failures in this safe virtual world, robots accumulate data and become robust to unexpected variables in the real world. Simulators, in other words, have evolved beyond simple test tools into “data production bases” that complete robot intelligence.
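
Generating dangerous scenarios on demand amounts to sampling combinations of conditions at frequencies chosen by the engineer rather than by reality. The sketch below assumes a hypothetical scenario schema (floor surface, lighting, target object) with weights that oversample hazards such as oil spills and darkness; it is a generic illustration, not the Isaac Sim or Omniverse API, and rendering and running each scenario are left out.

```python
import random

# Hypothetical scenario dimensions. The weights deliberately oversample rare
# hazards (oil spills, lights off) far beyond their real-world frequency.
SURFACES = {"dry": 0.4, "wet": 0.2, "oil_spill": 0.4}
LIGHTING = {"normal": 0.3, "dim": 0.3, "lights_off": 0.4}
TARGETS = ["box", "pallet", "tote"]

def weighted_choice(rng, options):
    """Pick one key from a {name: weight} dict."""
    names = list(options)
    return rng.choices(names, weights=[options[n] for n in names], k=1)[0]

def generate_scenarios(n, seed=0):
    """Produce n scenario descriptions for a simulator to render and run."""
    rng = random.Random(seed)
    return [
        {
            "id": i,
            "surface": weighted_choice(rng, SURFACES),
            "lighting": weighted_choice(rng, LIGHTING),
            "target": rng.choice(TARGETS),
        }
        for i in range(n)
    ]

def is_corner_case(scenario):
    return scenario["surface"] == "oil_spill" or scenario["lighting"] == "lights_off"

scenarios = generate_scenarios(2000)
share = sum(is_corner_case(s) for s in scenarios) / len(scenarios)
print(f"corner-case share: {share:.2f}")  # expected value: 1 - 0.6 * 0.6 = 0.64
```

With these weights most generated episodes contain at least one hazard, which is the opposite of what a fleet would record on real factory floors.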

“Quality over Quantity”… The Rise of Data Curation

The era of ‘Big Data,’ in which models were trained indiscriminately on massive amounts of data, is over. The industry is now staking its survival on ‘Data Curation,’ which manages the purity and quality of data, because noisy or physically inaccurate data can cause physical AI such as robots and autonomous vehicles to malfunction fatally.

NVIDIA’s physical AI foundation model ‘Cosmos,’ showcased at this CES, is a representative example of this shift. What allowed Cosmos to reproduce the laws of physics (gravity, friction, fluid dynamics, and so on) in virtual space was not simply scraping vast amounts of video from the internet, but strictly curating and training only on physically meaningful, quality-verified video data.

Through this, NVIDIA made the case for the Data-Centric philosophy that “AI performance is determined by the quality of training data, not the size of the model.” For successful AI adoption, building a curation pipeline that decides which data to select and feed has become as essential as designing the model architecture.
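
A curation pipeline is, at its core, a series of quality gates applied before training. The sketch below assumes each video clip already carries hypothetical metadata (resolution, a 0-1 physics-plausibility score from some upstream checker, and a near-duplicate flag); the field names and thresholds are illustrative, and this is not the pipeline NVIDIA used for Cosmos.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

@dataclass
class Clip:
    clip_id: str
    height_px: int                           # vertical resolution of the video
    physics_score: float                     # hypothetical 0-1 plausibility score from an upstream checker
    near_duplicate_of: Optional[str] = None  # set when a dedup pass matched an earlier clip

def curate(clips: List[Clip], min_height: int = 720,
           min_physics: float = 0.8) -> Tuple[List[Clip], Dict[str, str]]:
    """Keep clips that pass every quality gate and record why the rest were dropped."""
    kept: List[Clip] = []
    rejected: Dict[str, str] = {}
    for clip in clips:
        if clip.near_duplicate_of is not None:
            rejected[clip.clip_id] = "duplicate"
        elif clip.height_px < min_height:
            rejected[clip.clip_id] = "low_resolution"
        elif clip.physics_score < min_physics:
            rejected[clip.clip_id] = "implausible_physics"
        else:
            kept.append(clip)
    return kept, rejected

clips = [
    Clip("a", 1080, 0.95),
    Clip("b", 480, 0.99),
    Clip("c", 1080, 0.50),
    Clip("d", 1080, 0.90, near_duplicate_of="a"),
]
kept, rejected = curate(clips)
print([c.clip_id for c in kept])  # ['a']
print(rejected)  # {'b': 'low_resolution', 'c': 'implausible_physics', 'd': 'duplicate'}
```

Recording a rejection reason for every dropped clip makes it easy to audit what the filter removed, which matters when the thresholds themselves are judgment calls.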

CES 2026 left a clear message: the center of gravity for AI has shifted from software in the cloud and on screens to physical systems such as robots, automobiles, and factories.

- Action: Agentified systems have granted AI the power of execution,

- Physics: Simulations and world models have given it the wisdom to understand the real world, and

- Learning: Synthetic data and curation have become the foundation for sustainable growth.

Competitiveness after 2026 will not lie simply in possessing a Large Language Model (LLM). The metric for a company’s survival will be how reliably it can transplant this intelligence into actual robots, factories, and our living environments to “solve real-world problems.”
